As a fan of both open data and the ROH, I was excited to find the article promising that the ROH’s data might be opened. However, that article was published in 2014, and the only open data about the ROH that I could find was the Royal Opera House Collections Database. Its format is far from user-friendly (there are no cleaned CSV, or even XLSX, files), but luckily the structure of the entire website is fairly predictable, so I managed to write a basic scraper to extract the high-level performance data. Unfortunately, the database only contains data for the years 1946-2012.
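The site's markup isn't described here, so what follows is only a minimal sketch of the parsing step. It assumes (hypothetically) that a listing page presents one performance per row of an HTML table. It uses only Python's standard-library `html.parser`. The names `PerformanceRowParser` and `parse_performances` are illustrative and do not come from the actual scraper. Fetching the pages (for example with `urllib.request.urlopen`) is left out because the real URL pattern isn't given.

```python
from html.parser import HTMLParser


class PerformanceRowParser(HTMLParser):
    """Collect the text of each <td> cell, grouped by <tr> row.

    Assumption: performances are listed as rows of a plain HTML table.
    The real Collections Database markup may well differ.
    """

    def __init__(self):
        super().__init__()
        self.rows = []    # completed rows, each a list of cell strings
        self._row = None  # cells of the row currently being read
        self._cell = None # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Text inside a cell (including text inside nested tags such as <a>)
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag == "td" and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            # Rows without any <td> cells (e.g. <th> header rows) are skipped
            if self._row:
                self.rows.append(self._row)
            self._row = None


def parse_performances(html):
    """Return the table rows found in one (hypothetical) listing page."""
    parser = PerformanceRowParser()
    parser.feed(html)
    parser.close()
    return parser.rows
```

Because the pages share a predictable structure, the same parser could be fed every page in turn and the rows concatenated into one dataset.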

The result is this interactive dashboard created in Tableau:

Highlights:

- The number of performances in 1993 (159) came close to the peak previously reached in 1951 (168 performances).
- 1998 and 1999 were the years when the ROH was being reconstructed.
- Matinees started to become more popular in the noughties.
- 1968 was the last year in which the company performed as the Covent Garden Opera Company.
- Tosca was the most popular opera in the studied period, with 187 performances.
- La bohème was the most popular matinee.
- The Kirov Opera was the company with the third-highest number of appearances at the ROH (33). After its name changed to the Mariinsky Theatre, it gave four additional performances.
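Counts like the ones in these highlights come down to simple aggregation over the scraped records. The sketch below uses `collections.Counter` and assumes each performance is a dict with hypothetical keys `"year"`, `"title"` and `"matinee"`. The real schema of the scraped data isn't shown here, and `summarise` is an illustrative name.

```python
from collections import Counter


def summarise(performances):
    """Return (performances per year, most performed title, most performed matinee title).

    Assumption: each performance is a dict with hypothetical keys
    "year" (int), "title" (str) and "matinee" (bool).
    """
    per_year = Counter(p["year"] for p in performances)
    titles = Counter(p["title"] for p in performances)
    matinees = Counter(p["title"] for p in performances if p["matinee"])
    # most_common(1) returns [(title, count)]; ties keep first-seen order
    top_title = titles.most_common(1)[0][0] if titles else None
    top_matinee = matinees.most_common(1)[0][0] if matinees else None
    return per_year, top_title, top_matinee
```

The same pattern works for companies (for example with a hypothetical `"company"` key). Keying on the raw name, however, would count the Kirov Opera and the Mariinsky separately unless the names are normalised to one key first.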

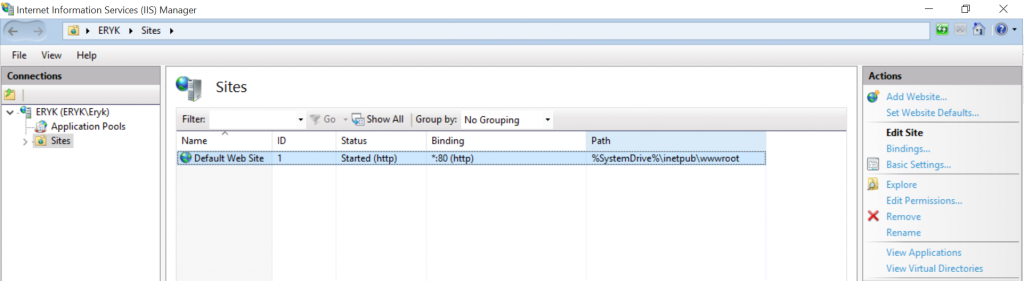

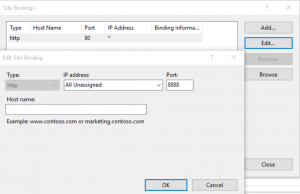

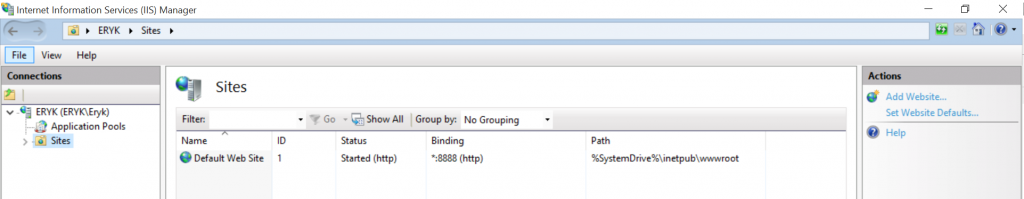

Here’s the scraper code: